Today marks our first ship on the roadmap toward a decentralized temporary data storage network built on top of Arweave and ao. This milestone is the first iteration of that future network: a beta release of Load S3, an object-storage temporary data storage layer with ~300TB of storage space available to rent.

N.B.: the current ~s3@1.0 device is still subject to breaking changes and upgrades, as is development on HyperBEAM’s edge branch. The Load S3 beta storage layer should therefore be treated as an unstable testing layer. Check its status at status.load.network.

Rationale

It’s not always clear upfront which data needs to be stored permanently. What will become invaluable later? What’s just noise? Noise doesn’t need to be stored for 200 years, and some compliance laws (healthcare-specific, GDPR) make it illegal to do so.

Sometimes permanence is overkill; sometimes it’s against regulations. Having worked inside the Arweave ecosystem since 2019, we’ve heard one feature request more than any other: temporary storage, a cache layer with hooks to Arweave that gives users flexibility over what ends up living forever.

Cost calculations get even more complex when you factor in data that might need compliance deletion. When a user exercises their right to be forgotten under GDPR, that data needs to disappear, not live immutably forever. Medical records past their legal retention period have the same constraint.

What developers, and even consumer cloud users, need is a bridge layer where data can live temporarily while you figure out its long-term value, with rails through to permanent storage when necessary. In this temporary layer, data maintains its cryptographic integrity and provenance (via ANS-104 DataItems) but doesn’t lock you into long-term storage costs until you’re certain.

This temporary approach enables new application architectures: social media posts that automatically upgrade to permanent storage based on engagement metrics, or research datasets that live temporarily during peer review before being committed upon publication. The ability to defer the permanence decision while maintaining data integrity serves use cases that neither pure temporary storage nor immediate permanent storage can handle.

Load S3 fills this gap, giving you time to decide what deserves to live forever without losing the cryptographic guarantees that make Arweave valuable.

That’s how Ben, co-founder of Decent Land Labs, put it when quoting WIRED’s post about cloud storage subscriptions, briefly outlining the importance of the Load S3 layer and its built-in pipeline to Arweave’s permanent storage:

(Source)

Load S3 foundation

The ~s3@1.0 device

The Load S3 storage layer is built on top of HyperBEAM as a device, with the ao network providing data programmability. At its core is the ~s3@1.0 device, a HyperBEAM S3 object-storage provider.

The HyperBEAM S3 device offers node operators maximum flexibility: they can either spin up MinIO clusters co-located with the HyperBEAM node and rent out the available storage, or connect to existing external clusters, natively integrating HyperBEAM’s S3 device with developers’ existing storage clusters. Load’s own S3 device, for instance, is co-located with its MinIO clusters.

In v0.1.0 of the s3_nif (aka s3 device), the device supports 8 S3 commands:

| Supported command | Notes |
|---|---|
| create_bucket | |
| head_bucket | |
| put_object | supports expiry: 1-365 days |
| get_object | supports range requests |
| delete_object | |
| delete_objects | |
| head_object | |
| list_objects | |
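The table lists expiry and range support but not how those values travel on the wire. As a hedged sketch, the hypothetical helpers below (not part of ~s3@1.0 or the AWS SDK) only validate an expiry in whole days against the stated 1-365 window and format a standard HTTP byte-range string, the kind of value the AWS SDK’s GetObjectCommand accepts in its Range field. How ~s3@1.0 actually receives the expiry value is not described here, so that part is left out.

```typescript
// Hypothetical helpers: sanity-check request parameters against the
// limits listed for v0.1.0 of s3_nif. Not part of ~s3@1.0 or the AWS SDK.

// Expiry bounds stated for put_object.
const MIN_EXPIRY_DAYS = 1;
const MAX_EXPIRY_DAYS = 365;

// True when `days` is a whole number inside the supported expiry window.
function isValidExpiryDays(days: number): boolean {
  return Number.isInteger(days) && days >= MIN_EXPIRY_DAYS && days <= MAX_EXPIRY_DAYS;
}

// Builds an HTTP Range header value for get_object.
// `start` and `end` are inclusive byte offsets; omit `end` to read to the end.
function byteRange(start: number, end?: number): string {
  if (!Number.isInteger(start) || start < 0) {
    throw new RangeError("start must be a non-negative integer");
  }
  if (end === undefined) {
    return `bytes=${start}-`;
  }
  if (!Number.isInteger(end) || end < start) {
    throw new RangeError("end must be an integer >= start");
  }
  return `bytes=${start}-${end}`;
}

// Usage: the first 1 KiB of an object, and everything from byte 4096 onward.
console.log(byteRange(0, 1023)); // bytes=0-1023
console.log(byteRange(4096)); // bytes=4096-
console.log(isValidExpiryDays(30), isValidExpiryDays(400)); // true false
```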

Erasure-coded redundancy, fault tolerance, and data availability

Load S3’s MinIO cluster, forming the storage layer, runs on 4 nodes with erasure coding enabled. Data is split into data and parity blocks, then striped across all nodes. This allows the system to tolerate the loss of up to two nodes without data loss or service interruption. Unlike full replication, which stores complete copies of each object on multiple nodes, erasure coding provides redundancy with lower storage overhead, ensuring durability while keeping capacity usage efficient.
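To make the overhead comparison concrete, here is a back-of-the-envelope sketch. It assumes each object is split into one shard per node, i.e. 2 data + 2 parity shards across the four nodes (the exact MinIO parity setting is not stated above), and compares that with full replication sized to survive the same two node losses.

```typescript
// Back-of-the-envelope comparison: erasure coding vs. full replication.
// Assumption: one shard per node, so a K+M layout spans K+M nodes.

interface RedundancyLayout {
  name: string;
  toleratedNodeLosses: number;
  storageOverhead: number; // raw bytes stored per byte of user data
}

// Reed-Solomon style K data + M parity shards: any K shards rebuild the object,
// so up to M shards (nodes) can be lost.
function erasureCoded(dataShards: number, parityShards: number): RedundancyLayout {
  return {
    name: `EC ${dataShards}+${parityShards}`,
    toleratedNodeLosses: parityShards,
    storageOverhead: (dataShards + parityShards) / dataShards,
  };
}

// Full replication: surviving f node losses needs f + 1 complete copies.
function replicated(toleratedLosses: number): RedundancyLayout {
  return {
    name: `${toleratedLosses + 1}x replication`,
    toleratedNodeLosses: toleratedLosses,
    storageOverhead: toleratedLosses + 1,
  };
}

const ec = erasureCoded(2, 2); // four nodes, survives two losses
const rep = replicated(2); // three copies, survives two losses

for (const l of [ec, rep]) {
  console.log(`${l.name}: tolerates ${l.toleratedNodeLosses} losses, ${l.storageOverhead}x overhead`);
}
// EC 2+2: tolerates 2 losses, 2x overhead
// 3x replication: tolerates 2 losses, 3x overhead
```

Under these assumptions both layouts survive two node losses, but erasure coding stores 2 bytes per byte of user data instead of 3.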

A four-node configuration also enables automatic data healing. When a failed node comes back online or a new node replaces it, missing blocks are rebuilt from the remaining healthy nodes in real time, without taking the cluster offline. Object integrity is verified using per-object checksums, and data availability can be asserted using S3 metadata such as size, timestamp, and ETag, ensuring each object is present, intact, and retrievable.
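The metadata-based availability check can be sketched on the client side. This is a hypothetical helper, not part of ~s3@1.0: it compares what a client expects (size, ETag, upload time) against a head_object response whose field names mirror the AWS SDK’s HeadObjectCommand output (ContentLength, ETag, LastModified). ETags are treated as opaque strings, since they are not always a plain content hash (for example, after multipart uploads).

```typescript
// Hypothetical availability check against head_object metadata.
// Field names mirror the AWS SDK's HeadObjectCommand output; the types and
// function are illustrative and not part of ~s3@1.0.

interface HeadObjectLike {
  ContentLength?: number;
  ETag?: string;
  LastModified?: Date;
}

interface ExpectedObject {
  size: number;
  etag: string;
  uploadedAfter: Date;
}

// S3 returns ETags wrapped in double quotes; strip them before comparing.
function normalizeEtag(etag: string): string {
  return etag.replace(/^"|"$/g, "");
}

// Returns the failed checks; an empty list means the metadata matches expectations.
function availabilityProblems(expected: ExpectedObject, head: HeadObjectLike): string[] {
  const problems: string[] = [];
  if (head.ContentLength !== expected.size) {
    problems.push(`size mismatch: expected ${expected.size}, got ${head.ContentLength}`);
  }
  if (head.ETag === undefined || normalizeEtag(head.ETag) !== normalizeEtag(expected.etag)) {
    problems.push("ETag mismatch");
  }
  if (
    head.LastModified === undefined ||
    head.LastModified.getTime() < expected.uploadedAfter.getTime()
  ) {
    problems.push("missing or stale LastModified");
  }
  return problems;
}

// Usage with a response shaped like head_object output.
const head: HeadObjectLike = {
  ContentLength: 11,
  ETag: '"abc123"',
  LastModified: new Date("2025-01-02T00:00:00Z"),
};
console.log(
  availabilityProblems({ size: 11, etag: "abc123", uploadedAfter: new Date("2025-01-01T00:00:00Z") }, head),
); // []
```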

The Load S3 layer inherits these guarantees by delegating them to a battle-tested distributed object storage system (in this implementation, MinIO). In the future, the decentralized Load S3 network, made up of multiple S3 HyperBEAM nodes, will have these properties out of the box, without re-engineering them from scratch.

~s3@1.0 & ANS-104 DataItems

The ~s3@1.0 device is designed with a built-in data protocol that natively handles temporary offchain storage of ANS-104 DataItems. This puts our rationale into practice: HyperBEAM S3 nodes can temporarily store signed, valid ANS-104 DataItems and push them to Arweave whenever needed, while preserving each DataItem’s provenance and determinism (e.g. ID, signature, timestamp).
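Why a DataItem’s ID survives the trip from S3 to Arweave can be shown in a few lines. Per the ANS-104 specification, a DataItem’s ID is the SHA-256 hash of its raw signature, base64url-encoded, so it is fixed at signing time regardless of where the item is stored. The sketch below uses Node’s built-in crypto module and placeholder signature bytes; it only derives the ID and does not verify the signature, which would require the owner key and the signed fields.

```typescript
import { createHash } from "node:crypto";

// ANS-104: DataItem ID = base64url(SHA-256(raw signature bytes)), no padding.
// The ID depends only on the signature, so it is the same whether the signed
// item sits in S3 or is later bundled to Arweave.
function dataItemIdFromSignature(rawSignature: Uint8Array): string {
  return createHash("sha256").update(rawSignature).digest("base64url");
}

// Placeholder bytes standing in for a real signature
// (an Arweave RSA-PSS signature is 512 bytes long).
const fakeSignature = new Uint8Array(512).fill(7);
console.log(dataItemIdFromSignature(fakeSignature)); // a 43-character base64url string
```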

Hybrid Gateway

Because the S3 device natively stores objects serialized as ANS-104 DataItems, we also needed those DataItems to be accessible, for example resolvable via Arweave gateways.

Because ~s3@1.0 is a HyperBEAM device, we could take advantage of HyperBEAM’s modular architecture to extend its gateway: we built the hb_gateway_s3.erl module and extended hb_gateway_client.erl to use the hb_gateway_s3 store module as a fallback to Arweave’s GraphQL API.

Additionally, the store order in hb_opts.erl has been modified to add offchain DataItem retrieval from S3 as the last fallback: HyperBEAM’s cache module first, then the Arweave gateway, then S3 (offchain). Offchain DataItems need the Data-Protocol: Load-S3 tag to be recognized by the subindex.
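The lookup order can be modeled in a few lines of TypeScript. This is an illustrative sketch only: the actual logic is Erlang inside HyperBEAM (hb_opts.erl, hb_gateway_client.erl, hb_gateway_s3.erl), and the in-memory stores, type names, and the choice to apply the tag rule as a filter on the S3 store are simplifying assumptions.

```typescript
// Illustrative model of the lookup order: cache, then Arweave gateway,
// then the offchain S3 store. Types and store names are hypothetical.

type Tags = Record<string, string>;

interface StoredItem {
  id: string;
  tags: Tags;
  data: string;
}

interface Store {
  name: string;
  get(id: string): StoredItem | undefined;
}

// Offchain items are only recognized when tagged Data-Protocol: Load-S3.
function isLoadS3Item(item: StoredItem): boolean {
  return item.tags["Data-Protocol"] === "Load-S3";
}

// Try each store in order and report which one answered.
function resolve(id: string, stores: Store[]): { store: string; item: StoredItem } | undefined {
  for (const store of stores) {
    const item = store.get(id);
    if (item === undefined) continue;
    // Apply the tag rule only to the offchain S3 store.
    if (store.name === "s3" && !isLoadS3Item(item)) continue;
    return { store: store.name, item };
  }
  return undefined;
}

// In-memory stand-in for a real backend.
function mapStore(name: string, items: StoredItem[]): Store {
  const byId = new Map(items.map((i) => [i.id, i] as const));
  return { name, get: (id) => byId.get(id) };
}

const stores: Store[] = [
  mapStore("cache", []),
  mapStore("arweave-gateway", [{ id: "onchain-1", tags: {}, data: "permanent" }]),
  mapStore("s3", [
    { id: "offchain-1", tags: { "Data-Protocol": "Load-S3" }, data: "temporary" },
    { id: "untagged-1", tags: {}, data: "not recognized" },
  ]),
];

console.log(resolve("onchain-1", stores)?.store); // arweave-gateway
console.log(resolve("offchain-1", stores)?.store); // s3
console.log(resolve("untagged-1", stores)); // undefined
```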

With these extensions in place, a HyperBEAM node running the ~s3@1.0 device benefits from a Hybrid Gateway that can resolve both onchain and offchain DataItems.

Read more about the Hybrid Gateway here

Load S3 Trust Assumptions: Optimism

In the current release, Load S3 is a storage layer consisting of a single centralized yet verifiable storage provider (a HyperBEAM node running the ~s3@1.0 device components).

This early-stage testing layer carries trust assumptions similar to those of other centralized services in the Arweave ecosystem, such as ANS-104 bundlers. As Load S3 gradually evolves from a layer into a decentralized network built on top of ao, its centralized and trust-based components will be removed one by one, toward a trustless, verifiable, and incentivized temporary data storage network.

Developer Guide

Load’s HyperBEAM node running the ~s3@1.0 device is available at the following endpoint: https://s3-node-0.load.network. Developers who want to use the HyperBEAM node as an S3 endpoint can use the official S3 SDKs, as long as they only call S3 commands supported by ~s3@1.0.

```typescript
import { S3Client } from "@aws-sdk/client-s3";

const accessKeyId = "load_acc..."; // generate with cloud.load.network
const secretAccessKey = ""; // intentionally empty

const s3Client = new S3Client({
  region: "eu-west-2",
  endpoint: "https://api.load.network/s3",
  credentials: {
    accessKeyId,
    secretAccessKey,
  },
  forcePathStyle: true, // path-style URLs: <endpoint>/<bucket>/<key>
});
```

Access key creation is supported on the Load Cloud Platform under the API Keys section. More on that here!

Integrations

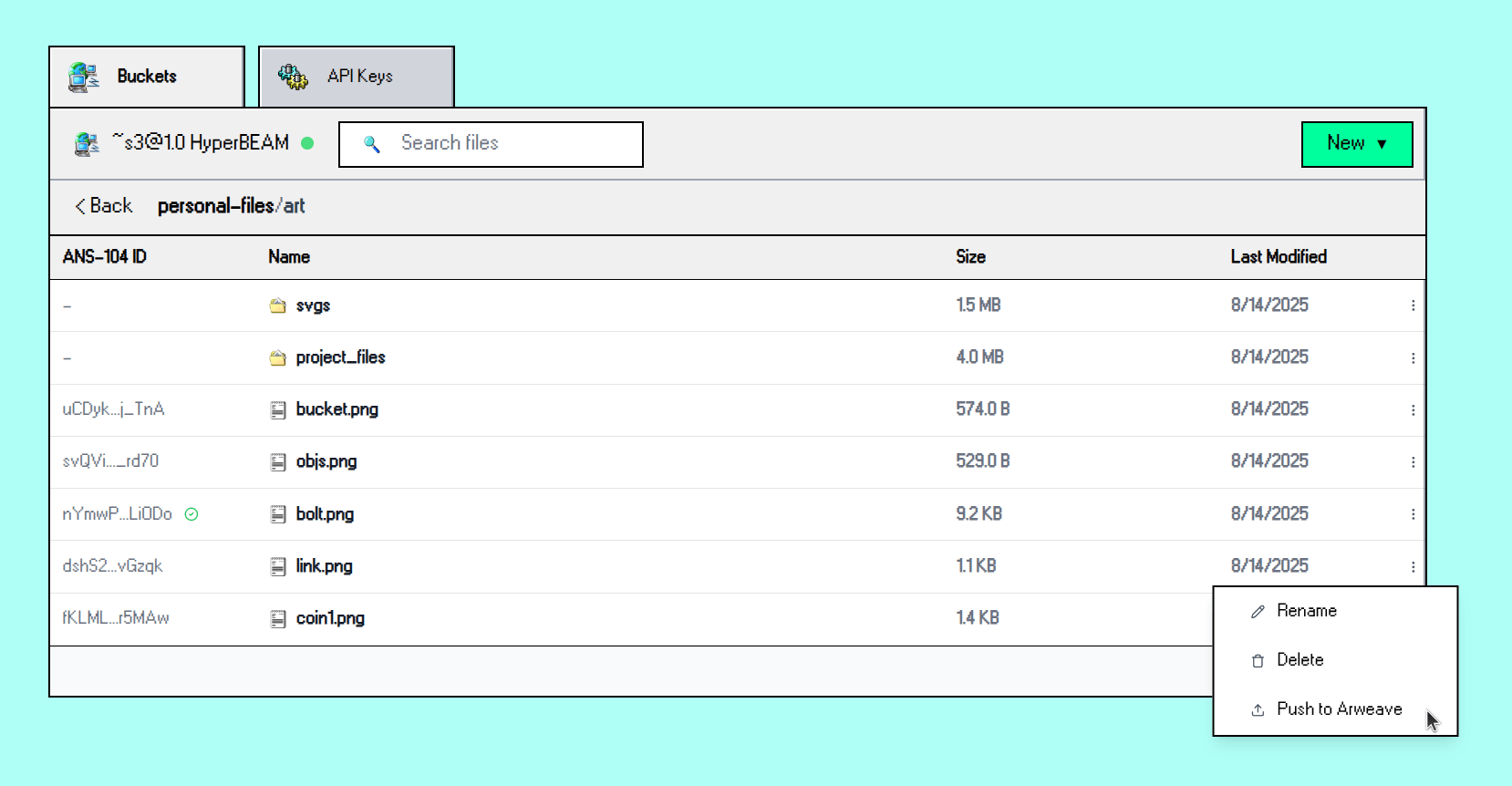

Load Cloud Platform

The Load S3 storage layer has been integrated into the Load Cloud Platform (LCP): users can now create buckets, upload objects of up to 250MB each, and push offchain objects of up to 25MB to Arweave via the Turbo bundler.
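A client could check these limits before uploading. The helper below is hypothetical and not part of the Load Cloud Platform; it assumes "MB" means 1,000,000 bytes, which is not specified above.

```typescript
// Hypothetical pre-flight check for the LCP limits: uploads up to 250MB per
// object, Arweave pushes (via Turbo) up to 25MB.
// Assumption: 1 MB = 1,000,000 bytes.

const MB = 1000 * 1000;
const MAX_UPLOAD_BYTES = 250 * MB;
const MAX_ARWEAVE_PUSH_BYTES = 25 * MB;

type Plan = "upload-and-push-eligible" | "upload-only" | "too-large";

function planFor(sizeBytes: number): Plan {
  if (sizeBytes > MAX_UPLOAD_BYTES) return "too-large";
  if (sizeBytes > MAX_ARWEAVE_PUSH_BYTES) return "upload-only";
  return "upload-and-push-eligible";
}

console.log(planFor(10 * MB)); // upload-and-push-eligible
console.log(planFor(100 * MB)); // upload-only
console.log(planFor(300 * MB)); // too-large
```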

AO data agents, a sneak peek

In the Load S3 LCP integration, the DataItems “router” is managed by data agents. The sneak-peek TL;DR: we are developing an ao data agents framework in which the data pipeline between Load S3 and Arweave is managed by AO processes (data agents), leveraging task-driven activation, HyperBEAM’s ~relay@1.0 device, and more. Stay tuned!

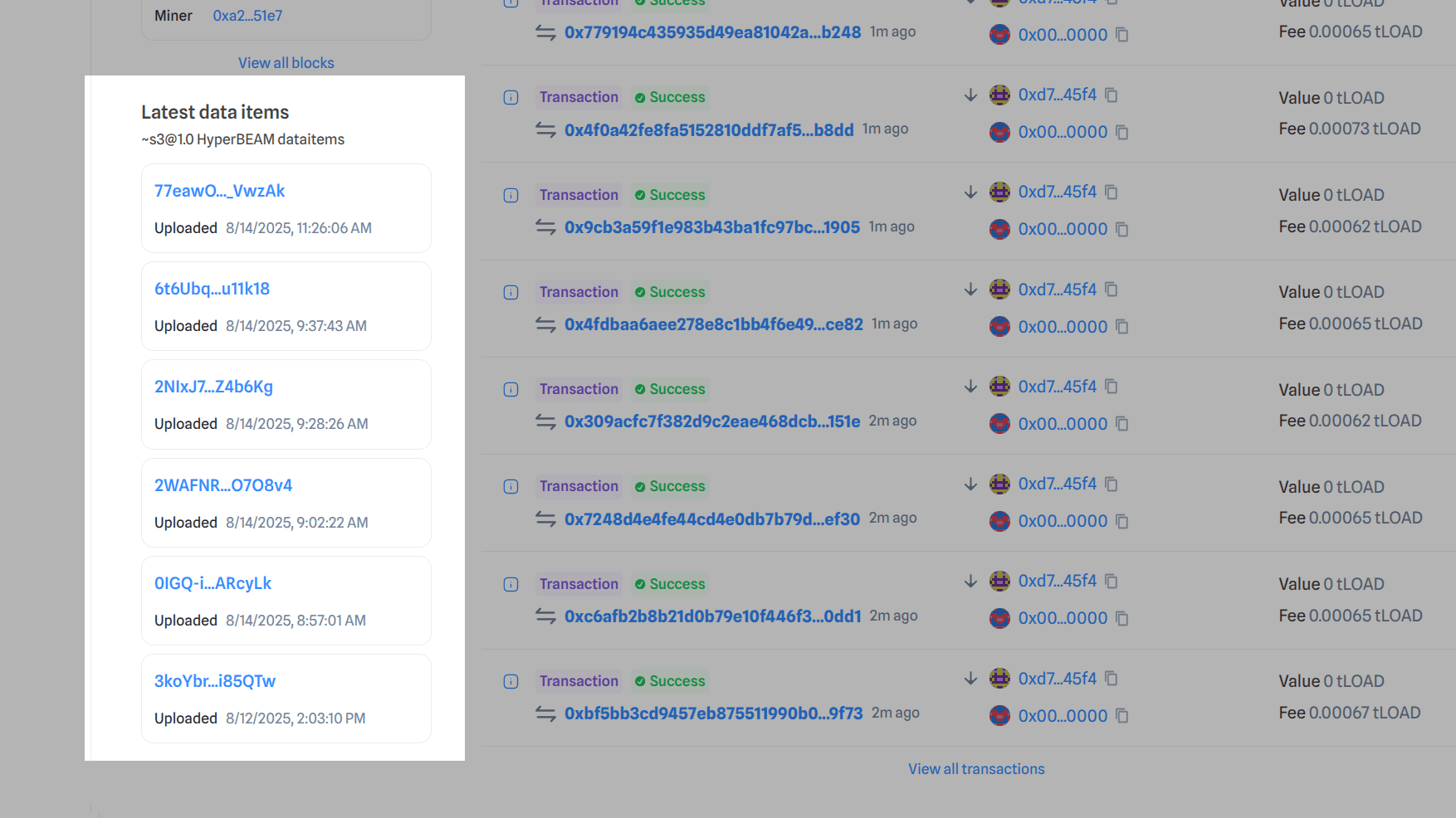

Load Network Explorer

Any object uploaded to s3-node-0.load.network is publicly accessible in the DataItems feed of the Load Network Explorer, so take care with what you upload 👀

Coming soon: AO credit system

The access keys you can generate in cloud.load.network’s API Keys tab will work as both S3 secret keys and generic API keys for other Load Network tooling. To make this system accessible and decentralized, we will be rolling out an AO process-based credit system where users can buy storage and compute by depositing AO tokens ($AO itself and other ecosystem tokens) into the process. More news coming soon!

What’s next

The Load S3 HyperBEAM device is the first storage provider in the upcoming AO-powered temporary storage network. We were inspired to build a hot cache storage layer as a middle ground between regularly purged EVM on-node storage and Arweave’s permanent cold backups.

Load S3 will become a native part of Load’s EVM stack, handling flexible blob storage and giving DA-related data a way to be easily verified before being committed to permanent storage.

Beyond that, the S3 standard is the most widely adopted storage API in web2, making it the most friction-free route to onboard the next generation of builders to Arweave.